
Python: scraping Douban's now-playing movie information via XPath attributes (collected and compiled by the author).

Disclaimer: this article is for research purposes only and has no other use.

GitHub repository: the project's GitHub repository.

The main page to crawl is: https://movie.douban.com/cinema/nowplaying/nanjing/

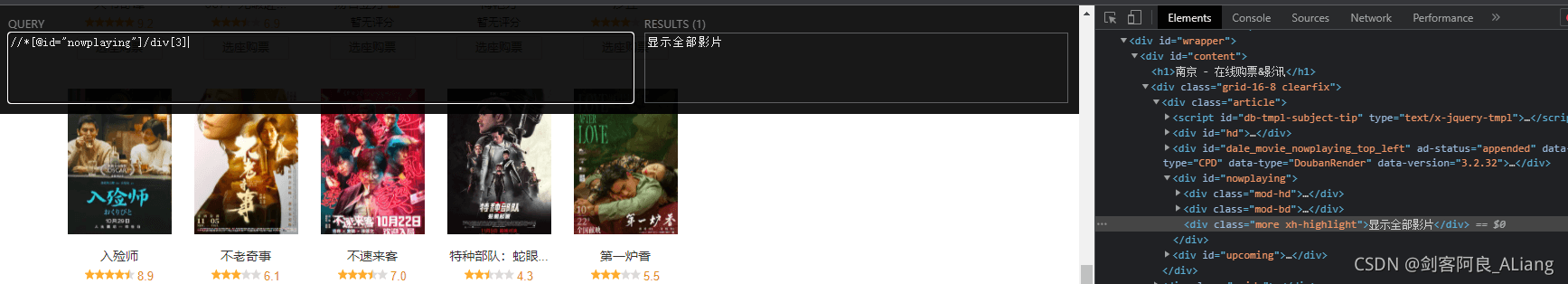

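The city at the end of the URL can be changed to whatever you need, so I won't dwell on it. The page only shows 15 movies until you click "展开全部影片" (show all movies) to expand the full list, so when using Selenium we need to add click logic after the page opens. See the page screenshot.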
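The city is simply the last path segment of the URL. Below is a minimal sketch of building the start URL from a city slug. It assumes other cities follow the same `/cinema/nowplaying/<city>/` pattern as `nanjing`, and the helper name `nowplaying_url` is made up for illustration.

```python
from urllib.parse import urljoin

# Base URL taken from the page used in this article; the trailing slash matters for urljoin.
BASE_URL = "https://movie.douban.com/cinema/nowplaying/"


def nowplaying_url(city: str) -> str:
    """Build the now-playing URL for a city slug such as "nanjing" (hypothetical helper)."""
    # Strip stray slashes so "nanjing", "/nanjing" and "nanjing/" all give the same result.
    return urljoin(BASE_URL, city.strip("/") + "/")


print(nowplaying_url("nanjing"))
```

In the spider, `start_urls = [nowplaying_url("nanjing")]` would produce the same URL as the hard-coded one.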

Open the page source with F12, then use the XPath Helper tool to verify the XPath copied via right-click.

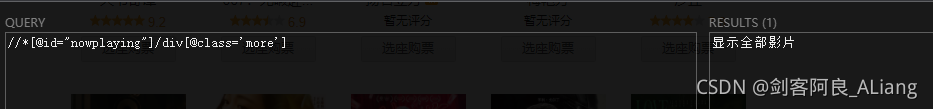

To keep the element from going missing when the page layout changes, I changed the XPath to locate it by class name.

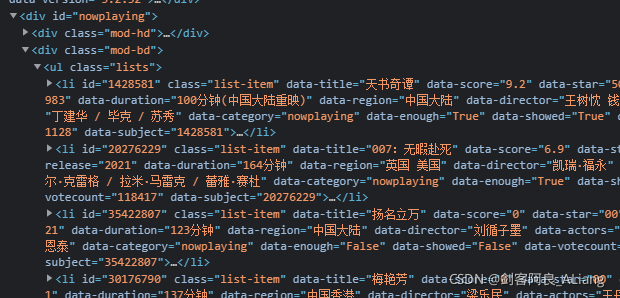
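To illustrate why a class-based path survives layout changes better than a copied positional one, here is a small offline sketch using Python's standard-library `xml.etree.ElementTree`, which supports a limited XPath subset. The two page snippets are hypothetical, simplified stand-ins, not Douban's real markup, and `matched_class` is a helper invented for this illustration. The positional path `div[2]` stops pointing at the button as soon as an extra `<div>` is inserted, while `div[@class='more']` still finds it.

```python
import xml.etree.ElementTree as ET

# Two hypothetical versions of the same block. Version 2 adds a banner <div>,
# the kind of change a page redesign might introduce.
PAGE_V1 = """<html><body><div id="nowplaying">
  <div class="mod-bd"/>
  <div class="more"/>
</div></body></html>"""

PAGE_V2 = """<html><body><div id="nowplaying">
  <div class="banner"/>
  <div class="mod-bd"/>
  <div class="more"/>
</div></body></html>"""

# Positional path, like one copied with right-click "Copy XPath"
POSITIONAL = ".//*[@id='nowplaying']/div[2]"
# Class-based path, like the one used to find the "more" button
BY_CLASS = ".//*[@id='nowplaying']/div[@class='more']"


def matched_class(page: str, path: str) -> str:
    """Return the class attribute of the first element matched by `path` ("" if none)."""
    node = ET.fromstring(page).find(path)
    return node.get("class", "") if node is not None else ""


for page in (PAGE_V1, PAGE_V2):
    print(matched_class(page, POSITIONAL), matched_class(page, BY_CLASS))
```

Note that `[@class='more']` is an exact match; full XPath engines, such as the one behind Scrapy selectors, also support `contains(@class, 'more')` for elements that carry several classes.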

Next, look at the information for each movie.

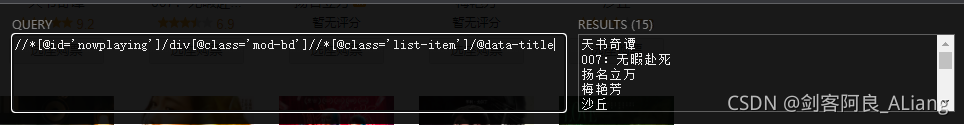

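Analyzing this: we can take the `nowplaying` div as the root node and get the nodes beneath it whose class is `list-item`. Their attributes hold exactly the content we want.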
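The idea can be tried offline before writing the spider. The sketch below uses the standard-library `xml.etree.ElementTree` on a hypothetical, simplified snippet shaped like the structure just described (real Douban HTML is not well-formed XML, so the actual spider uses Scrapy selectors instead). It treats the `nowplaying` div as the root, finds every `class="list-item"` node, and reads a few `data-*` attributes from each one. The sample values and the name `extract_movies` are made up for illustration.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# Hypothetical, simplified markup: a nowplaying <div> containing nodes with
# class="list-item" whose data-* attributes carry the movie details.
SAMPLE = """<div id="nowplaying">
  <div class="mod-bd">
    <ul>
      <li class="list-item" data-title="Movie A" data-score="7.5" data-region="Mainland China"/>
      <li class="list-item" data-title="Movie B" data-score="8.1" data-region="USA"/>
    </ul>
  </div>
</div>"""

# A subset of the attribute names the spider reads.
FIELDS = ["data-title", "data-score", "data-region"]


def extract_movies(markup: str) -> List[Dict[str, str]]:
    """Return one dict per list-item node, keyed by attribute name."""
    root = ET.fromstring(markup)  # here the nowplaying <div> is the root element
    movies = []
    for node in root.iterfind("./div[@class='mod-bd']//*[@class='list-item']"):
        # .get() returns the attribute value, or "" if the node lacks it
        movies.append({name: node.get(name, "") for name in FIELDS})
    return movies


for movie in extract_movies(SAMPLE):
    print(movie)
```

Reading all attributes from the same node keeps each movie's fields together. In Scrapy, the analogous per-node approach would select the `list-item` nodes first and then read attributes from each selector (for example via its `.attrib` mapping).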

That looks workable, so let's create the project and start coding along these lines.


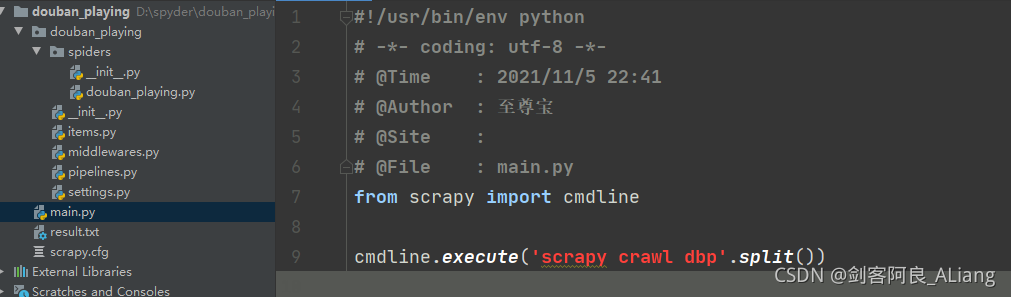

Create a project called douban_playing with the scrapy command.

```shell
scrapy startproject douban_playing
```

Define the movie information item.

```python
# Define here the models for your scraped items
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/items.html

import scrapy


class DoubanPlayingItem(scrapy.Item):
    # Movie title (the spider and pipeline both use item['title'])
    title = scrapy.Field()
    # Score
    score = scrapy.Field()
    # Release year
    release = scrapy.Field()
    # Running time
    duration = scrapy.Field()
    # Region
    region = scrapy.Field()
    # Director
    director = scrapy.Field()
    # Lead actors
    actors = scrapy.Field()
```

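The main addition is a piece of code that clicks "展开全部影片" (show all movies). It goes in the downloader middleware in `middlewares.py`.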

```python
# Define here the models for your spider middleware
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/spider-middleware.html
import time

from scrapy import signals
from scrapy.http import HtmlResponse
from selenium.common.exceptions import TimeoutException
from selenium.webdriver.common.by import By


class DoubanPlayingSpiderMiddleware:
    # Scrapy's generated pass-through spider middleware; it changes nothing.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(self, response, spider):
        # Should return None or raise an exception.
        return None

    def process_spider_output(self, response, result, spider):
        # Must return an iterable of Request or item objects.
        for i in result:
            yield i

    def process_spider_exception(self, response, exception, spider):
        # Should return either None or an iterable of Request or item objects.
        pass

    def process_start_requests(self, start_requests, spider):
        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)


class DoubanPlayingDownloaderMiddleware:

    @classmethod
    def from_crawler(cls, crawler):
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_request(self, request, spider):
        # Load the page in the spider's Selenium browser, click "展开全部影片"
        # (show all movies), and hand the rendered HTML back to Scrapy.
        # Returning a Response here skips Scrapy's own download of this request.
        try:
            spider.browser.get(request.url)
            spider.browser.maximize_window()
            time.sleep(2)
            # Selenium 4 removed find_element_by_xpath; use find_element(By.XPATH, ...)
            spider.browser.find_element(
                By.XPATH, "//*[@id='nowplaying']/div[@class='more']").click()
            time.sleep(5)  # give the expanded list time to render
            return HtmlResponse(url=spider.browser.current_url,
                                body=spider.browser.page_source,
                                encoding="utf-8", request=request)
        except TimeoutException as e:
            print('Timeout exception: {}'.format(e))
            spider.browser.execute_script('window.stop()')
        finally:
            # Closes the browser window after this request; fine here because
            # the spider has a single start URL.
            spider.browser.close()

    def process_response(self, request, response, spider):
        # Must return a Response or Request object, or raise IgnoreRequest.
        return response

    def process_exception(self, request, exception, spider):
        pass

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)
```
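The middleware above relies on fixed `time.sleep(2)` and `time.sleep(5)` pauses. Selenium's own tool for this is `WebDriverWait` from `selenium.webdriver.support.ui`, used with conditions from `selenium.webdriver.support.expected_conditions` such as `element_to_be_clickable((By.XPATH, ...))`. Since that needs a live browser, the sketch below only illustrates the underlying idea in plain Python: poll a condition until it returns something truthy or a timeout expires. `wait_until` is a hypothetical helper, not part of Selenium.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], T], timeout: float = 10.0, interval: float = 0.5) -> T:
    """Call `condition` until it returns a truthy value, or raise after `timeout` seconds."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result  # condition met: stop waiting immediately
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} s")
        time.sleep(interval)  # short pause between checks instead of one long fixed sleep
```

Inside `process_request`, a call such as `wait_until(lambda: spider.browser.find_elements(By.XPATH, "//*[@id='nowplaying']/div[@class='more']"), timeout=10)` would return as soon as the button is in the DOM (a non-empty list is truthy), rather than always sleeping for a fixed time.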

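Using the attribute names, we extract all the movie information. Note how the attributes are extracted: the XPath ends in `/@data-title`, `/@data-score`, and so on, which selects the attribute values themselves rather than the elements.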

```python
#!/usr/bin/env python
# coding=utf-8
"""
@project : douban_playing
@author  : huyi
@file    : douban_playing.py
@ide     : PyCharm
@time    : 2021-11-10 16:31:23
"""
import scrapy
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.chrome.service import Service

from douban_playing.items import DoubanPlayingItem


class DoubanPlayingSpider(scrapy.Spider):
    name = 'dbp'
    # allowed_domains = ['blog.csdn.net']
    start_urls = ['https://movie.douban.com/cinema/nowplaying/nanjing/']
    # Every movie is a class="list-item" node under #nowplaying; {} is the attribute name
    nowplaying = "//*[@id='nowplaying']/div[@class='mod-bd']//*[@class='list-item']/@{}"
    properties = ['data-title', 'data-score', 'data-release', 'data-duration',
                  'data-region', 'data-director', 'data-actors']

    def __init__(self, *args, **kwargs):
        super().__init__(*args, **kwargs)
        chrome_options = Options()
        chrome_options.add_argument('--headless')  # run Chrome in headless mode
        chrome_options.add_argument('--disable-gpu')
        chrome_options.add_argument('--no-sandbox')
        # Selenium 4 takes the driver path via Service and the options via options=
        self.browser = webdriver.Chrome(
            service=Service(executable_path="E:\\chromedriver_win32\\chromedriver.exe"),
            options=chrome_options)
        self.browser.set_page_load_timeout(30)

    def parse(self, response, **kwargs):
        # One list per attribute, each in page order
        titles = response.xpath(self.nowplaying.format(self.properties[0])).extract()
        scores = response.xpath(self.nowplaying.format(self.properties[1])).extract()
        releases = response.xpath(self.nowplaying.format(self.properties[2])).extract()
        durations = response.xpath(self.nowplaying.format(self.properties[3])).extract()
        regions = response.xpath(self.nowplaying.format(self.properties[4])).extract()
        directors = response.xpath(self.nowplaying.format(self.properties[5])).extract()
        actors = response.xpath(self.nowplaying.format(self.properties[6])).extract()
        # The x-th entry of every list belongs to the x-th movie
        for x in range(len(titles)):
            item = DoubanPlayingItem()
            item['title'] = titles[x]
            item['score'] = scores[x]
            item['release'] = releases[x]
            item['duration'] = durations[x]
            item['region'] = regions[x]
            item['director'] = directors[x]
            item['actors'] = actors[x]
            yield item
```
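`parse` builds each item by index across seven parallel lists, which works as long as every `list-item` node carries all seven attributes. If one node lacked an attribute, the XPath for that attribute would return fewer values and the indices would silently shift or raise `IndexError`. A minimal sketch of a guarded version of the same pattern, assuming plain dicts of lists as input; `rows_from_columns` is a hypothetical helper, not part of Scrapy.

```python
from typing import Dict, Iterator, List


def rows_from_columns(columns: Dict[str, List[str]]) -> Iterator[Dict[str, str]]:
    """Turn parallel attribute lists into one dict per movie.

    Raises ValueError if the lists differ in length, since that means some
    list-item node lacked an attribute and the positions no longer line up.
    """
    lengths = {name: len(values) for name, values in columns.items()}
    if len(set(lengths.values())) > 1:
        raise ValueError(f"attribute lists have different lengths: {lengths}")
    names = list(columns)
    # zip pairs the x-th value of every list, like titles[x], scores[x], ...
    for values in zip(*columns.values()):
        yield dict(zip(names, values))


rows = rows_from_columns({"title": ["A", "B"], "score": ["7.5", "8.0"]})
print(list(rows))
```

Each row uses the item's field names as keys, so in the spider it could populate a `DoubanPlayingItem` directly.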

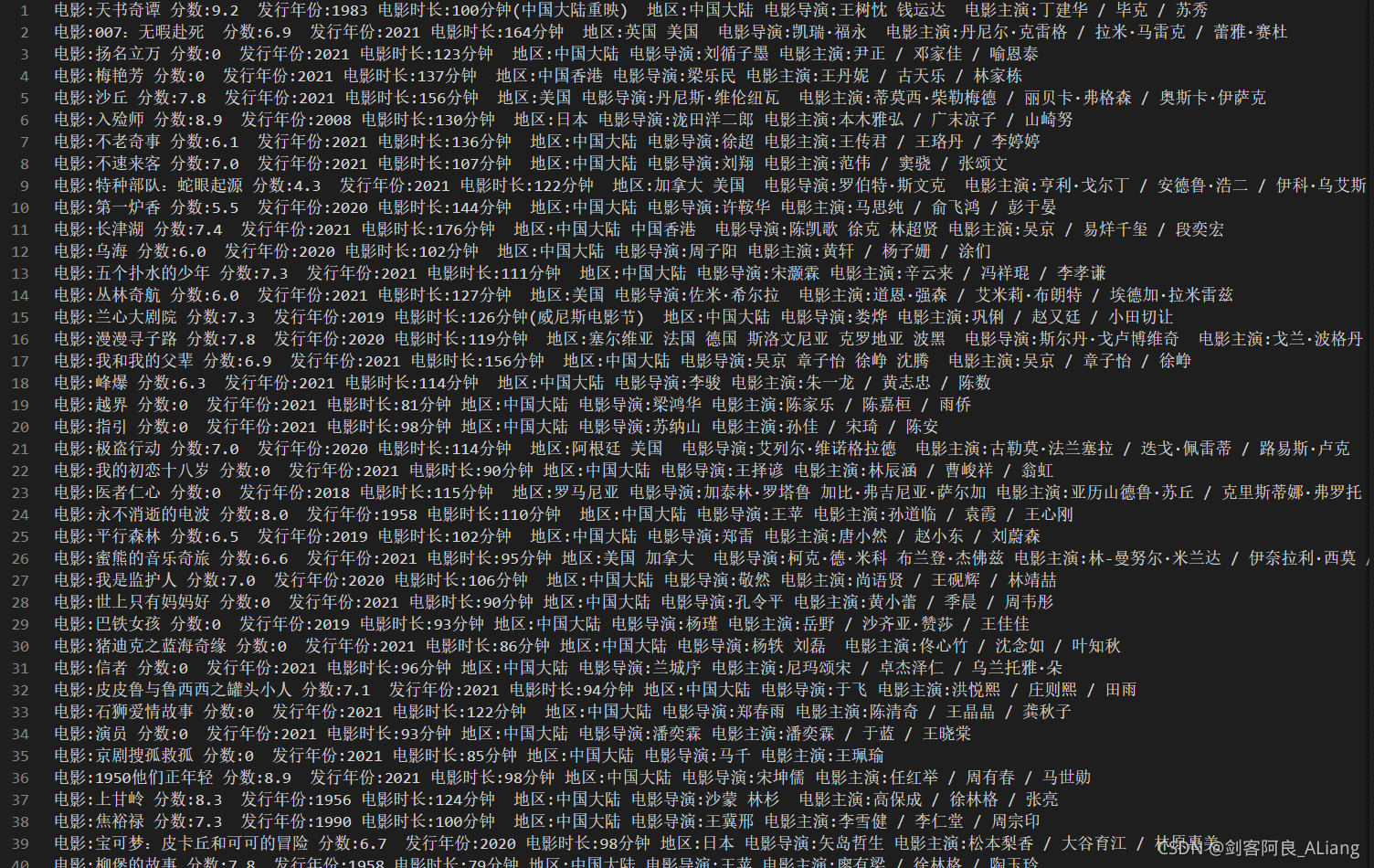

As before, write the extracted movie data to a text file in a fixed format.

```python
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html


class DoubanPlayingPipeline:
    def __init__(self):
        self.file = open('result.txt', 'w', encoding='utf-8')

    def process_item(self, item, spider):
        # One tab-separated line per movie
        self.file.write(
            "Title:{}\tScore:{}\tRelease year:{}\tDuration:{}\tRegion:{}\tDirector:{}\tActors:{}\n".format(
                item['title'], item['score'], item['release'], item['duration'],
                item['region'], item['director'], item['actors']))
        return item

    def close_spider(self, spider):
        self.file.close()
```

The settings are all routine: just enable a few of the default options.

```python
# Scrapy settings for douban_playing project
#
# https://docs.scrapy.org/en/latest/topics/settings.html
# https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'douban_playing'

SPIDER_MODULES = ['douban_playing.spiders']
NEWSPIDER_MODULE = 'douban_playing.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent
USER_AGENT = 'Mozilla/5.0'

# Obey robots.txt rules
ROBOTSTXT_OBEY = False

# Disable cookies (enabled by default)
COOKIES_ENABLED = False

# Override the default request headers:
DEFAULT_REQUEST_HEADERS = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    'Accept-Language': 'en',
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.94 Safari/537.36'
}

# Enable or disable spider middlewares
SPIDER_MIDDLEWARES = {
    'douban_playing.middlewares.DoubanPlayingSpiderMiddleware': 543,
}

# Enable or disable downloader middlewares
DOWNLOADER_MIDDLEWARES = {
    'douban_playing.middlewares.DoubanPlayingDownloaderMiddleware': 543,
}

# Configure item pipelines
ITEM_PIPELINES = {
    'douban_playing.pipelines.DoubanPlayingPipeline': 300,
}

# The other settings generated by `scrapy startproject` (concurrency, download
# delay, Telnet console, extensions, AutoThrottle, HTTP cache) stay commented out.
```

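As before, instead of typing the scrapy command directly, I wrote a .py file that runs it. Pay attention to where this .py file is placed: Scrapy locates the project by searching for `scrapy.cfg` from the current working directory upward, so the script has to run from inside the project directory.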
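The runner script itself is not shown, so here is a minimal sketch of one, assuming it lives somewhere inside the project (for example a hypothetical `main.py` next to `scrapy.cfg`). `scrapy.cmdline.execute` is Scrapy's real entry point for command-line style invocation; the helper names `find_project_root` and `run_spider` are made up for illustration. Switching to the directory that holds `scrapy.cfg` first makes the script work no matter which directory it is launched from.

```python
import os
from pathlib import Path
from typing import Optional


def find_project_root(start: Path) -> Optional[Path]:
    """Walk up from `start` and return the first directory containing scrapy.cfg."""
    for directory in (start, *start.parents):
        if (directory / "scrapy.cfg").is_file():
            return directory
    return None


def run_spider(spider_name: str = "dbp") -> None:
    """Equivalent to typing `scrapy crawl <spider_name>` in the project root."""
    # Imported here so that find_project_root() works without Scrapy installed.
    from scrapy import cmdline

    root = find_project_root(Path(__file__).resolve().parent)
    if root is None:
        raise RuntimeError("scrapy.cfg not found: place this script inside the Scrapy project")
    # Scrapy looks for scrapy.cfg starting from the current working directory.
    os.chdir(root)
    cmdline.execute(["scrapy", "crawl", spider_name])  # calls sys.exit() when the crawl ends
```

Calling `run_spider()` at the bottom of the script starts the `dbp` spider exactly as `scrapy crawl dbp` would.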

Here is the result after running it.

Perfect!!!


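Lately I've been writing a series of crawler examples, learning and figuring things out as I go. I'm recording and sharing how I implemented them so I can look back on them after a while.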

A quote to share:

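Love: no one knows where it rises, where it rests, where it binds, where it unbinds, where it wanders, or where it ends. (Sword Snow Stride, 《雪中悍刀行》)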

If you found this article useful, please don't hold back on the likes. Thanks!


Original article: https://huyi-aliang.blog.csdn.net/article/details/121254579
