
This article, "Learn TensorFlow2 in One Hour: Fashion Mnist", was collected and compiled by its author.

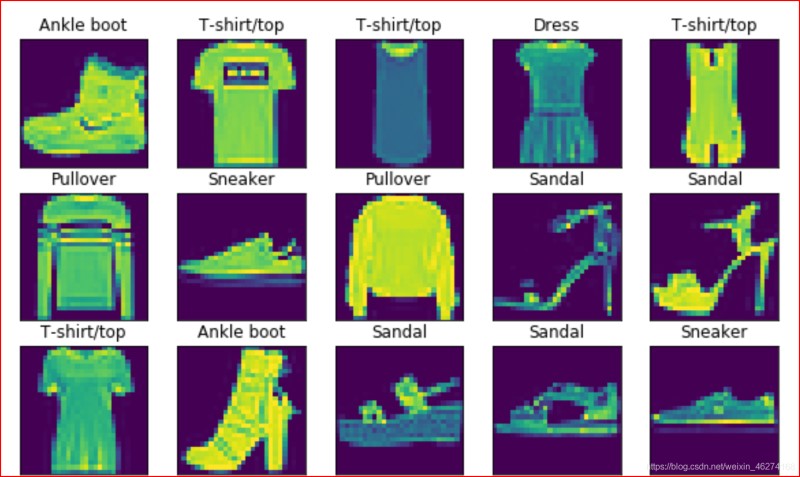

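Fashion Mnist is an image dataset similar to Mnist. It contains 70,000 images of different products (60,000 for training and 10,000 for testing) in 10 categories. Each image is a 28×28 grayscale picture.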
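The training code later batches the 60,000 training images 256 at a time, so each epoch has ceil(60000 / 256) = 235 batches. This is the `235` hard-coded in the TensorBoard `step` values. A small sketch of the arithmetic, assuming `Dataset.batch` keeps the final partial batch (its default, `drop_remainder=False`); `num_batches` is a hypothetical helper, not a TensorFlow function:

```python
import math


def num_batches(num_samples: int, batch_size: int) -> int:
    """Batches produced by Dataset.batch(batch_size) when the final, smaller
    batch is kept (drop_remainder=False, the default)."""
    return math.ceil(num_samples / batch_size)


train_batches = num_batches(60_000, 256)
test_batches = num_batches(10_000, 256)
# Size of the final training batch: whatever is left after the full batches
last_train_batch = 60_000 - (train_batches - 1) * 256

print(train_batches, test_batches, last_train_batch)  # 235 40 96
```

If `batch_size` is changed, the hard-coded `235` no longer matches and would need to be recomputed the same way.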


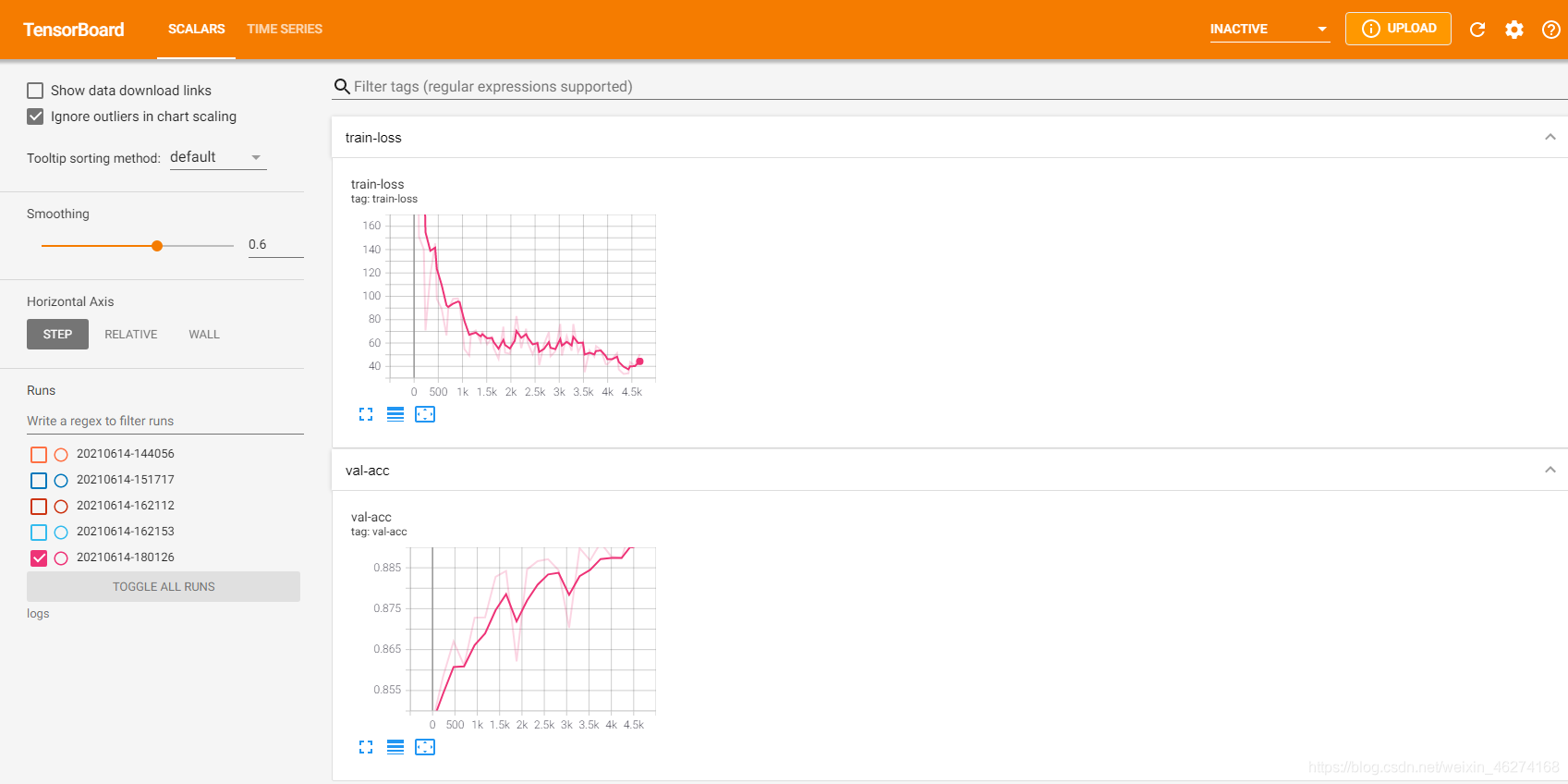

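TensorBoard is TensorFlow's visualization toolkit.

We can create a new summary writer with `tf.summary.create_file_writer(file_path)`.

Example

```python
import datetime

import tensorflow as tf

# Use the current time as the name of the log sub-directory
current_time = datetime.datetime.now().strftime("%Y%m%d-%H%M%S")
# Directory that TensorBoard will read
log_dir = 'logs/' + current_time
# Create the writer
summary_writer = tf.summary.create_file_writer(log_dir)
```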
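The timestamp format `%Y%m%d-%H%M%S` gives every run its own sub-directory, and because the fields go from most to least significant, the directory names also sort in chronological order. A small standard-library sketch of the naming scheme; `make_log_dir` is a hypothetical helper, not part of TensorFlow, and it takes the time as an argument so the result is reproducible:

```python
import datetime


def make_log_dir(root: str, now: datetime.datetime) -> str:
    """Build a per-run log directory such as 'logs/20210614-180127'."""
    return root + "/" + now.strftime("%Y%m%d-%H%M%S")


run_dir = make_log_dir("logs", datetime.datetime(2021, 6, 14, 18, 1, 27))
print(run_dir)  # logs/20210614-180127
```

TensorBoard can then be pointed at the parent directory with `tensorboard --logdir logs`, and each timestamped sub-directory shows up as a separate run.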

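With `tf.summary.scalar` we can write data to a summary writer. It writes to whichever writer is currently the default, which is why the call in the example sits inside `with summary_writer.as_default():`.

Format

```python
tf.summary.scalar(name, data, step=None, description=None)
```

Example

```python
with summary_writer.as_default():
    tf.summary.scalar("train-loss", float(Cross_Entropy), step=step)
```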
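The `step` argument is the x-axis value TensorBoard plots against. If the batch index restarted from 0 every epoch, points from different epochs would share the same x values; the training code avoids this by offsetting the batch index with the number of batches per epoch. A plain-Python sketch of that mapping (the names `global_step` and `STEPS_PER_EPOCH` are hypothetical):

```python
STEPS_PER_EPOCH = 235  # ceil(60000 / 256), the value hard-coded in the training code


def global_step(epoch: int, step: int, steps_per_epoch: int = STEPS_PER_EPOCH) -> int:
    """Map (epoch, batch index within that epoch) to one ever-increasing x value."""
    return epoch * steps_per_epoch + step


# The last batch of epoch 0 and the first batch of epoch 1 sit next to each other
print(global_step(0, 234), global_step(1, 0))  # 234 235
```

The test function logs `val-acc` at `epoch * 235`, i.e. at the x position of the first batch of that epoch; `(epoch + 1) * 235` would instead place each accuracy point at the end of the epoch it was measured after.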


`tf.keras.metrics.Mean()` computes the running mean of the values passed to it.

Format

```python
tf.keras.metrics.Mean(name='mean', dtype=None)
```

Example

```python
# Loss meter
loss_meter = tf.keras.metrics.Mean()
```

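`tf.keras.metrics.Accuracy()` computes how often predictions exactly equal the labels.

Format

```python
tf.keras.metrics.Accuracy(name='accuracy', dtype=None)
```

Example

```python
# Accuracy meter
acc_meter = tf.keras.metrics.Accuracy()
```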
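`Accuracy` compares `y_true` and `y_pred` element by element, so it expects class indices rather than one-hot vectors or raw logits. That is why the test function applies `tf.argmax` to both the logits and the one-hot labels before calling `update_state`. A plain-Python sketch of the same comparison; `argmax` and `accuracy` here are hypothetical helpers, not TensorFlow APIs:

```python
from typing import List, Sequence


def argmax(row: Sequence[float]) -> int:
    """Index of the largest value in one row (the first index on ties)."""
    return max(range(len(row)), key=lambda i: row[i])


def accuracy(y_true: List[int], y_pred: List[int]) -> float:
    """Fraction of positions where the predicted class equals the true class."""
    matches = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return matches / len(y_true)


logits = [[2.0, 0.1, -1.0], [0.3, 0.2, 0.9], [1.5, 1.7, 0.0]]
one_hot = [[1, 0, 0], [0, 1, 0], [0, 1, 0]]

pred = [argmax(r) for r in logits]     # [0, 2, 1]
labels = [argmax(r) for r in one_hot]  # [0, 1, 1]
print(accuracy(labels, pred))  # 2 of 3 positions match
```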

We can update a metric with `update_state` and reset it with `reset_state`.

For example

```python
# Update the loss
loss_meter.update_state(Cross_Entropy)
# Reset
loss_meter.reset_state()
```
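To make the update/reset cycle concrete, here is a hypothetical plain-Python stand-in that keeps a running total and a count. It is a sketch of the idea for scalar inputs only, not TensorFlow's implementation, and returning 0.0 before the first update is a choice made for this sketch:

```python
class RunningMean:
    """Hypothetical stand-in for the update_state / result / reset_state
    cycle of tf.keras.metrics.Mean, for scalar inputs only."""

    def __init__(self) -> None:
        self.total = 0.0
        self.count = 0

    def update_state(self, value: float) -> None:
        # Add one observation to the running sum
        self.total += value
        self.count += 1

    def result(self) -> float:
        # Sketch choice: report 0.0 before the first update instead of dividing by zero
        return self.total / self.count if self.count else 0.0

    def reset_state(self) -> None:
        # Forget everything seen so far
        self.total = 0.0
        self.count = 0


meter = RunningMean()
for loss in [4.0, 2.0, 3.0]:
    meter.update_state(loss)
print(meter.result())  # 3.0
meter.reset_state()
meter.update_state(10.0)
print(meter.result())  # 10.0
```

In `train`, the loss meter is reset right after each print, so the value printed at step 100 is the mean of batches 1 through 100 and the value at step 200 is the mean of batches 101 through 200, not a mean over the whole epoch so far.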


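```python
def pre_process(x, y):
    """
    Preprocess the data
    :param x: features
    :param y: labels
    :return: the processed x, y
    """
    # Convert x: scale pixels to [0, 1] and flatten each 28x28 image to 784 values
    x = tf.cast(x, tf.float32) / 255
    x = tf.reshape(x, [-1, 784])

    # Convert y: integer label -> one-hot vector of length 10
    y = tf.cast(y, dtype=tf.int32)
    y = tf.one_hot(y, depth=10)

    return x, y
```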
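Per example, `pre_process` does three things: it divides the 0 to 255 pixel values by 255, flattens the 28×28 image row by row into 784 numbers, and turns the integer label into a one-hot vector of length 10. Because `get_data` calls `map(pre_process)` after `batch`, the real function receives a whole batch at once, which is why it reshapes to `[-1, 784]`. The sketch below applies the same transformation to a single tiny example in plain Python; `pre_process_plain` is a hypothetical name, and a 2×2 image stands in for a 28×28 one:

```python
from typing import List, Tuple


def pre_process_plain(image: List[List[int]], label: int,
                      depth: int = 10) -> Tuple[List[float], List[float]]:
    """Scale uint8 pixels to [0, 1], flatten row by row, and one-hot encode the label."""
    x = [pixel / 255 for row in image for pixel in row]
    y = [1.0 if i == label else 0.0 for i in range(depth)]
    return x, y


x, y = pre_process_plain([[0, 255], [51, 102]], label=3)
print(x)  # [0.0, 1.0, 0.2, 0.4]
print(y)  # [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```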

```python
def get_data():
    """
    Load the data
    :return: the batched training and test sets
    """
    # Load the data
    (X_train, y_train), (X_test, y_test) = tf.keras.datasets.fashion_mnist.load_data()

    # Shuffle and batch the training set
    train_db = tf.data.Dataset.from_tensor_slices((X_train, y_train)).shuffle(60000, seed=0)
    train_db = train_db.batch(batch_size).map(pre_process)

    # Shuffle and batch the test set
    test_db = tf.data.Dataset.from_tensor_slices((X_test, y_test)).shuffle(10000, seed=0)
    test_db = test_db.batch(batch_size).map(pre_process)

    # Return
    return train_db, test_db
```
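`shuffle(buffer_size)` does not necessarily shuffle the whole dataset: it keeps a buffer of `buffer_size` elements and draws each output at random from that buffer, refilling it from the input. Passing the full dataset size (60000 for training, 10000 for test), as `get_data` does, therefore gives a full uniform shuffle. The sketch below approximates the buffering idea in plain Python so the effect of the buffer size is visible; it is an illustration, not tf.data's actual implementation, and `buffered_shuffle` is a hypothetical name:

```python
import random
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def buffered_shuffle(items: Iterable[T], buffer_size: int, seed: int) -> Iterator[T]:
    """Fill a buffer, then repeatedly emit a random buffered element and
    put the next input element in its slot."""
    rng = random.Random(seed)
    source = iter(items)
    buffer: List[T] = []
    # Fill the buffer with the first `buffer_size` elements
    for item in source:
        buffer.append(item)
        if len(buffer) == buffer_size:
            break
    # Emit a random buffered element, refilling its slot from the input
    for item in source:
        i = rng.randrange(len(buffer))
        yield buffer[i]
        buffer[i] = item
    # Input exhausted: emit what is left in random order
    rng.shuffle(buffer)
    yield from buffer


print(list(buffered_shuffle(range(10), buffer_size=1, seed=0)))  # [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
print(sorted(buffered_shuffle(range(10), buffer_size=10, seed=0)) == list(range(10)))  # True
```

With `buffer_size=1` the order is unchanged, and with a small buffer the first output is always one of the first `buffer_size` inputs; only a buffer as large as the input allows any permutation. Shuffling the test set is harmless but does not change the measured accuracy, since the accuracy meter accumulates over all test batches regardless of order.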

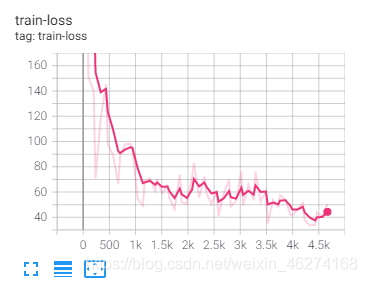

```python
def train(epoch, train_db):
    """
    Train the model for one epoch
    :param epoch: current epoch (0-based)
    :param train_db: the batched training set
    :return: None
    """
    for step, (x, y) in enumerate(train_db):
        with tf.GradientTape() as tape:
            # Get the model output
            logits = model(x)

            # Compute the cross-entropy, summed over the batch
            Cross_Entropy = tf.losses.categorical_crossentropy(y, logits, from_logits=True)
            Cross_Entropy = tf.reduce_sum(Cross_Entropy)

        # Update the loss meter
        loss_meter.update_state(Cross_Entropy)

        # Compute the gradients
        grads = tape.gradient(Cross_Entropy, model.trainable_variables)

        # Update the parameters
        optimizer.apply_gradients(zip(grads, model.trainable_variables))

        # Print the average loss every 100 batches
        if step % 100 == 0:
            print("step:", step, "Cross_Entropy:", loss_meter.result().numpy())

            # Reset the meter
            loss_meter.reset_state()

            # Visualization (235 batches per epoch = ceil(60000 / 256))
            with summary_writer.as_default():
                tf.summary.scalar("train-loss", float(Cross_Entropy), step=epoch * 235 + step)
```
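With `from_logits=True`, `categorical_crossentropy` applies softmax to the raw model outputs internally and returns, per example, −Σ y_i · log softmax(z)_i; the training loop then sums (rather than averages) these over the batch with `tf.reduce_sum`. That explains the first logged value: an untrained network gives roughly uniform predictions over the 10 classes, so each example costs about ln 10 ≈ 2.303 and a batch of 256 about 589.5, close to the 591.6 printed at step 0 in the output. A plain-Python sketch of the computation (helper names are hypothetical; log-sum-exp is used for numerical stability):

```python
import math
from typing import List, Sequence


def softmax_cross_entropy(one_hot: Sequence[float], logits: Sequence[float]) -> float:
    """-sum_i y_i * log(softmax(logits)_i), computed stably via log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return -sum(y * (z - log_sum_exp) for y, z in zip(one_hot, logits))


def batch_loss(labels: List[Sequence[float]], logits: List[Sequence[float]]) -> float:
    """Sum over the batch, matching tf.reduce_sum in the training loop."""
    return sum(softmax_cross_entropy(y, z) for y, z in zip(labels, logits))


# Equal logits for all 10 classes: each example costs ln(10)
uniform_logits = [[0.0] * 10 for _ in range(256)]
labels = [[1.0 if i == k % 10 else 0.0 for i in range(10)] for k in range(256)]
print(round(batch_loss(labels, uniform_logits), 2))  # 589.46
```

Because the loss is a sum, its magnitude grows with `batch_size`; `tf.reduce_mean` would report the per-example average instead.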

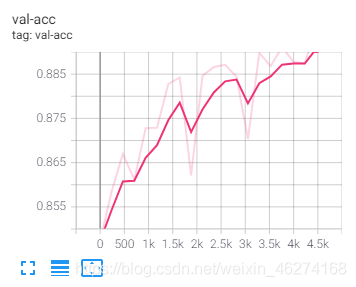

```python
def test(epoch, test_db):
    """
    Evaluate the model
    :param epoch: current epoch (0-based)
    :param test_db: the batched test set
    :return: None
    """
    # Reset the meter
    acc_meter.reset_state()

    for x, y in test_db:
        # Get the model output
        logits = model(x)

        # Predicted class
        pred = tf.argmax(logits, axis=1)

        # Convert the one-hot labels back to class indices
        y = tf.argmax(y, axis=1)

        # Accumulate the accuracy
        acc_meter.update_state(y, pred)

    # Print
    print("epoch:", epoch + 1, "Accuracy:", acc_meter.result().numpy() * 100, "%")

    # Visualization
    with summary_writer.as_default():
        tf.summary.scalar("val-acc", acc_meter.result().numpy(), step=epoch * 235)
```
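Because `acc_meter` is reset once at the start of `test` and then updated batch by batch, it accumulates a total of matches and a count across `update_state` calls, so its result is total correct predictions divided by total test images and every image counts equally. That matters here because 10,000 test images in batches of 256 leave a final batch of only 16; averaging per-batch accuracies instead would give that small batch the same weight as a full one. The sketch below contrasts the two with made-up counts (not results from this model; the helper names are hypothetical):

```python
from typing import List, Tuple

# (correct predictions, batch size) for each batch
Batch = Tuple[int, int]


def pooled_accuracy(batches: List[Batch]) -> float:
    """Total correct / total examples: how an accumulating metric behaves."""
    return sum(c for c, _ in batches) / sum(n for _, n in batches)


def mean_of_batch_accuracies(batches: List[Batch]) -> float:
    """Unweighted average of per-batch accuracies (overweights a small last batch)."""
    return sum(c / n for c, n in batches) / len(batches)


# Made-up counts: 39 full batches with 224/256 correct, then a 16-image batch with none correct
batches = [(224, 256)] * 39 + [(0, 16)]
print(pooled_accuracy(batches))           # 0.8736
print(mean_of_batch_accuracies(batches))  # 0.853125
```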

```python
def main():
    """
    Main function
    :return: None
    """
    # Load the data
    train_db, test_db = get_data()

    # Train and evaluate once per epoch
    for epoch in range(iteration_num):
        train(epoch, train_db)
        test(epoch, test_db)
```

```python
import datetime

import tensorflow as tf

# Hyperparameters
batch_size = 256  # number of samples per training batch
learning_rate = 0.001  # learning rate
iteration_num = 20  # number of epochs

# Optimizer
optimizer = tf.keras.optimizers.Adam(learning_rate=learning_rate)

# Loss meter
loss_meter = tf.keras.metrics.Mean()

# Accuracy meter
acc_meter = tf.keras.metrics.Accuracy()

# Visualization
current_time = datetime.datetime.now().strftime("%Y%m%d-%H%M%S")
log_dir = 'logs/' + current_time
summary_writer = tf.summary.create_file_writer(log_dir)  # create the writer

# Model
model = tf.keras.Sequential([
    tf.keras.layers.Dense(256, activation=tf.nn.relu),
    tf.keras.layers.Dense(128, activation=tf.nn.relu),
    tf.keras.layers.Dense(64, activation=tf.nn.relu),
    tf.keras.layers.Dense(32, activation=tf.nn.relu),
    tf.keras.layers.Dense(10)
])

# Print the model summary
model.build(input_shape=[None, 28 * 28])
print(model.summary())


def pre_process(x, y):
    """
    Preprocess the data
    :param x: features
    :param y: labels
    :return: the processed x, y
    """
    # Convert x: scale pixels to [0, 1] and flatten each image to 784 values
    x = tf.cast(x, tf.float32) / 255
    x = tf.reshape(x, [-1, 784])

    # Convert y: integer label -> one-hot vector of length 10
    y = tf.cast(y, dtype=tf.int32)
    y = tf.one_hot(y, depth=10)

    return x, y


def get_data():
    """
    Load the data
    :return: the batched training and test sets
    """
    # Load the data
    (X_train, y_train), (X_test, y_test) = tf.keras.datasets.fashion_mnist.load_data()

    # Shuffle and batch the training set
    train_db = tf.data.Dataset.from_tensor_slices((X_train, y_train)).shuffle(60000, seed=0)
    train_db = train_db.batch(batch_size).map(pre_process)

    # Shuffle and batch the test set
    test_db = tf.data.Dataset.from_tensor_slices((X_test, y_test)).shuffle(10000, seed=0)
    test_db = test_db.batch(batch_size).map(pre_process)

    # Return
    return train_db, test_db


def train(epoch, train_db):
    """
    Train the model for one epoch
    :param epoch: current epoch (0-based)
    :param train_db: the batched training set
    :return: None
    """
    for step, (x, y) in enumerate(train_db):
        with tf.GradientTape() as tape:
            # Get the model output
            logits = model(x)

            # Compute the cross-entropy, summed over the batch
            Cross_Entropy = tf.losses.categorical_crossentropy(y, logits, from_logits=True)
            Cross_Entropy = tf.reduce_sum(Cross_Entropy)

        # Update the loss meter
        loss_meter.update_state(Cross_Entropy)

        # Compute the gradients
        grads = tape.gradient(Cross_Entropy, model.trainable_variables)

        # Update the parameters
        optimizer.apply_gradients(zip(grads, model.trainable_variables))

        # Print the average loss every 100 batches
        if step % 100 == 0:
            print("step:", step, "Cross_Entropy:", loss_meter.result().numpy())

            # Reset the meter
            loss_meter.reset_state()

            # Visualization (235 batches per epoch = ceil(60000 / 256))
            with summary_writer.as_default():
                tf.summary.scalar("train-loss", float(Cross_Entropy), step=epoch * 235 + step)


def test(epoch, test_db):
    """
    Evaluate the model
    :param epoch: current epoch (0-based)
    :param test_db: the batched test set
    :return: None
    """
    # Reset the meter
    acc_meter.reset_state()

    for x, y in test_db:
        # Get the model output
        logits = model(x)

        # Predicted class
        pred = tf.argmax(logits, axis=1)

        # Convert the one-hot labels back to class indices
        y = tf.argmax(y, axis=1)

        # Accumulate the accuracy
        acc_meter.update_state(y, pred)

    # Print
    print("epoch:", epoch + 1, "Accuracy:", acc_meter.result().numpy() * 100, "%")

    # Visualization
    with summary_writer.as_default():
        tf.summary.scalar("val-acc", acc_meter.result().numpy(), step=epoch * 235)


def main():
    """
    Main function
    :return: None
    """
    # Load the data
    train_db, test_db = get_data()

    # Train and evaluate once per epoch
    for epoch in range(iteration_num):
        train(epoch, train_db)
        test(epoch, test_db)


if __name__ == "__main__":
    main()
```

Output

```text
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
dense (Dense)                (None, 256)               200960
_________________________________________________________________
dense_1 (Dense)              (None, 128)               32896
_________________________________________________________________
dense_2 (Dense)              (None, 64)                8256
_________________________________________________________________
dense_3 (Dense)              (None, 32)                2080
_________________________________________________________________
dense_4 (Dense)              (None, 10)                330
=================================================================
Total params: 244,522
Trainable params: 244,522
Non-trainable params: 0
_________________________________________________________________
None
2021-06-14 18:01:27.399812: I tensorflow/compiler/mlir/mlir_graph_optimization_pass.cc:176] None of the MLIR Optimization Passes are enabled (registered 2)
step: 0 Cross_Entropy: 591.5974
step: 100 Cross_Entropy: 196.49309
step: 200 Cross_Entropy: 125.2562
epoch: 1 Accuracy: 84.72999930381775 %
step: 0 Cross_Entropy: 107.64579
step: 100 Cross_Entropy: 105.854385
step: 200 Cross_Entropy: 99.545975
epoch: 2 Accuracy: 85.83999872207642 %
step: 0 Cross_Entropy: 95.42945
step: 100 Cross_Entropy: 91.366234
step: 200 Cross_Entropy: 90.84072
epoch: 3 Accuracy: 86.69999837875366 %
step: 0 Cross_Entropy: 82.03317
step: 100 Cross_Entropy: 83.20552
step: 200 Cross_Entropy: 81.57012
epoch: 4 Accuracy: 86.11000180244446 %
step: 0 Cross_Entropy: 82.94046
step: 100 Cross_Entropy: 77.56677
step: 200 Cross_Entropy: 76.996346
epoch: 5 Accuracy: 87.27999925613403 %
step: 0 Cross_Entropy: 75.59219
step: 100 Cross_Entropy: 71.70899
step: 200 Cross_Entropy: 74.15144
epoch: 6 Accuracy: 87.29000091552734 %
step: 0 Cross_Entropy: 76.65844
step: 100 Cross_Entropy: 70.09151
step: 200 Cross_Entropy: 70.84446
epoch: 7 Accuracy: 88.27999830245972 %
step: 0 Cross_Entropy: 67.50707
step: 100 Cross_Entropy: 64.85907
step: 200 Cross_Entropy: 68.63099
epoch: 8 Accuracy: 88.41999769210815 %
step: 0 Cross_Entropy: 65.50318
step: 100 Cross_Entropy: 62.2706
step: 200 Cross_Entropy: 63.80803
epoch: 9 Accuracy: 86.21000051498413 %
step: 0 Cross_Entropy: 66.95486
step: 100 Cross_Entropy: 61.84385
step: 200 Cross_Entropy: 62.18851
epoch: 10 Accuracy: 88.45999836921692 %
step: 0 Cross_Entropy: 59.779297
step: 100 Cross_Entropy: 58.602314
step: 200 Cross_Entropy: 59.837025
epoch: 11 Accuracy: 88.66000175476074 %
step: 0 Cross_Entropy: 58.10068
step: 100 Cross_Entropy: 55.097878
step: 200 Cross_Entropy: 59.906315
epoch: 12 Accuracy: 88.70999813079834 %
step: 0 Cross_Entropy: 57.584858
step: 100 Cross_Entropy: 54.95376
step: 200 Cross_Entropy: 55.797752
epoch: 13 Accuracy: 88.44000101089478 %
step: 0 Cross_Entropy: 53.54782
step: 100 Cross_Entropy: 53.62939
step: 200 Cross_Entropy: 54.632828
epoch: 14 Accuracy: 87.02999949455261 %
step: 0 Cross_Entropy: 54.387398
step: 100 Cross_Entropy: 52.323734
step: 200 Cross_Entropy: 53.968185
epoch: 15 Accuracy: 88.98000121116638 %
step: 0 Cross_Entropy: 50.468914
step: 100 Cross_Entropy: 50.79311
step: 200 Cross_Entropy: 51.296227
epoch: 16 Accuracy: 88.67999911308289 %
step: 0 Cross_Entropy: 48.753258
step: 100 Cross_Entropy: 46.809692
step: 200 Cross_Entropy: 48.08208
epoch: 17 Accuracy: 89.10999894142151 %
step: 0 Cross_Entropy: 46.830627
step: 100 Cross_Entropy: 47.208813
step: 200 Cross_Entropy: 48.671318
epoch: 18 Accuracy: 88.77999782562256 %
step: 0 Cross_Entropy: 46.15514
step: 100 Cross_Entropy: 45.026627
step: 200 Cross_Entropy: 45.371685
epoch: 19 Accuracy: 88.7399971485138 %
step: 0 Cross_Entropy: 47.696465
step: 100 Cross_Entropy: 41.52749
step: 200 Cross_Entropy: 46.71362
epoch: 20 Accuracy: 89.56000208854675 %
```

This concludes the article "Learn TensorFlow2 in One Hour: Fashion Mnist". For more on TensorFlow2 and Fashion Mnist, please see my earlier articles or the related articles below.

Original article: https://iamarookie.blog.csdn.net/article/details/117917350

