https://blog.csdn.net/weixin_44911081/article/details/121227024?ops_request_misc=%257B%2522request%255Fid%2522%253A%2522163695916016780269859534%2522%252C%2522scm%2522%253A%252220140713.130102334.pc%255Fblog.%2522%257D&request_id=163695916016780269859534&biz_id=0&utm_medium=distribute.pc_search_result.none-task-blog-2~blog~first_rank_v2~rank_v29-3-121227024.pc_v2_rank_blog_default&utm_term=hive&spm=1018.2226.3001.4450

https://blog.csdn.net/weixin_55821558/article/details/125830542

https://blog.csdn.net/a12355556/article/details/124565395

https://www.freesion.com/article/8763708397/

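Apache release: http://archive.apache.org/dist/hive/hive-1.1.0/

CDH build (link no longer works): https://archive.cloudera.com/p/cdh5/cdh/5 Note: the login name is an email address, and the password must combine upper-case letters, lower-case letters, digits, and symbols.

Download by command (no longer works): wget https://archive.cloudera.com/cdh5/cdh/5/hive-1.1.0-cdh5.14.2.tar.gz

CDH5 cloud-drive backup: https://pan.baidu.com/s/1XUGRMpjTbrJWDy9QCT9vTw?pwd=gmyf

Comparison: the CDH build is more compatible than the Apache release, but either one works for this walkthrough.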

Create the installation script: vi hive_insatll.sh

echo "----------Installing Hive----------"

# -C sets the directory to extract into

tar -zxf /usr/local/hive-1.1.0-cdh5.14.2.tar.gz -C /usr/local/

# Rename the extracted directory

mv /usr/local/hive-1.1.0-cdh5.14.2 /usr/local/hive110

# Configure the environment variables

echo '#hive' >>/etc/profile

echo 'export HIVE_HOME=/usr/local/hive110' >>/etc/profile

echo 'export PATH=$PATH:$HIVE_HOME/bin' >>/etc/profile

# Create the hive-site.xml configuration file

touch /usr/local/hive110/conf/hive-site.xml

path="/usr/local/hive110/conf/hive-site.xml"

# Write the configuration

echo '<?xml version="1.0" encoding="UTF-8" standalone="no"?>' >> $path

echo '<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>' >> $path

echo '<configuration>' >> $path

# Same settings as an ordinary JDBC connection: replace the IP address, user name, and password with your own

echo '<property><name>javax.jdo.option.ConnectionURL</name><value>jdbc:mysql://192.168.91.137:3306/hive137?createDatabaseIfNotExist=true</value></property>' >> $path

echo '<property><name>javax.jdo.option.ConnectionDriverName</name><value>com.mysql.jdbc.Driver</value></property>' >> $path

echo '<property><name>javax.jdo.option.ConnectionUserName</name><value>root</value></property>' >> $path

echo '<property><name>javax.jdo.option.ConnectionPassword</name><value>123123</value></property>' >> $path

echo '<property><name>hive.server2.thrift.client.user</name><value>root</value></property>' >> $path

echo '<property><name>hive.server2.thrift.client.password</name><value>123123</value></property>' >> $path

echo '</configuration>' >>$path
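Because the script appends with `>>`, running it a second time would write every property into hive-site.xml twice. One possible alternative, shown here only as a sketch, writes the whole file in one step with a here-document. The function name `write_hive_site` and its arguments are hypothetical helpers for illustration; the property names and values are the ones used in the script above.

```shell
#!/bin/sh
# Sketch: write hive-site.xml in one step with a here-document.
# write_hive_site is a hypothetical helper name, not part of Hive.
write_hive_site() {
    conf_file="$1"   # target path, e.g. /usr/local/hive110/conf/hive-site.xml
    db_host="$2"     # MySQL host, e.g. 192.168.91.137
    db_user="$3"     # MySQL user name
    db_pass="$4"     # MySQL password
    # '>' truncates the file first, so re-running does not duplicate entries
    cat > "$conf_file" <<EOF
<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property><name>javax.jdo.option.ConnectionURL</name><value>jdbc:mysql://${db_host}:3306/hive137?createDatabaseIfNotExist=true</value></property>
  <property><name>javax.jdo.option.ConnectionDriverName</name><value>com.mysql.jdbc.Driver</value></property>
  <property><name>javax.jdo.option.ConnectionUserName</name><value>${db_user}</value></property>
  <property><name>javax.jdo.option.ConnectionPassword</name><value>${db_pass}</value></property>
  <property><name>hive.server2.thrift.client.user</name><value>${db_user}</value></property>
  <property><name>hive.server2.thrift.client.password</name><value>${db_pass}</value></property>
</configuration>
EOF
}

# Demonstration against a temporary file; pass the real conf path when installing.
demo_file="$(mktemp)"
write_hive_site "$demo_file" 192.168.91.137 root 123123
write_hive_site "$demo_file" 192.168.91.137 root 123123   # second run overwrites the first
grep -c '<configuration>' "$demo_file"                    # prints 1: only one opening tag
```

For a real install, the call would be something like `write_hive_site /usr/local/hive110/conf/hive-site.xml 192.168.91.137 root 123123`, with your own host and credentials.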

Add execute permission: chmod u+x hive_insatll.sh

Run the script: ./hive_insatll.sh or sh hive_insatll.sh

Reload the environment variables: source /etc/profile

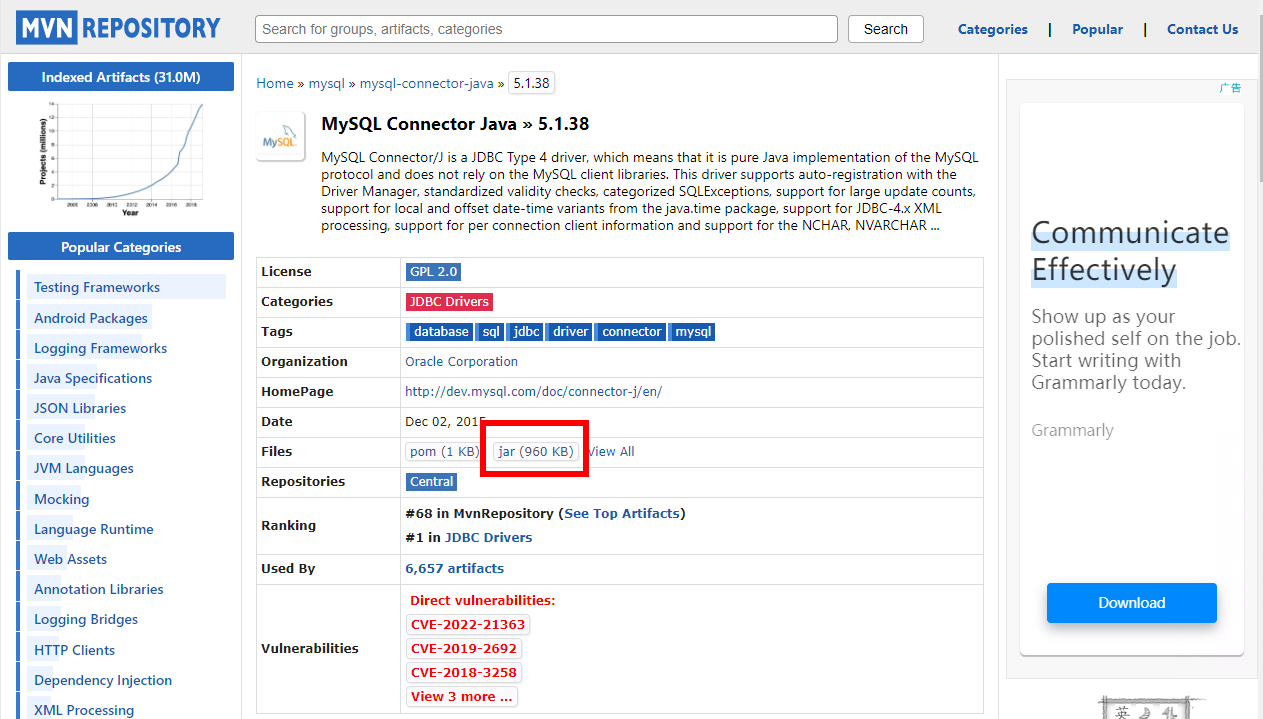

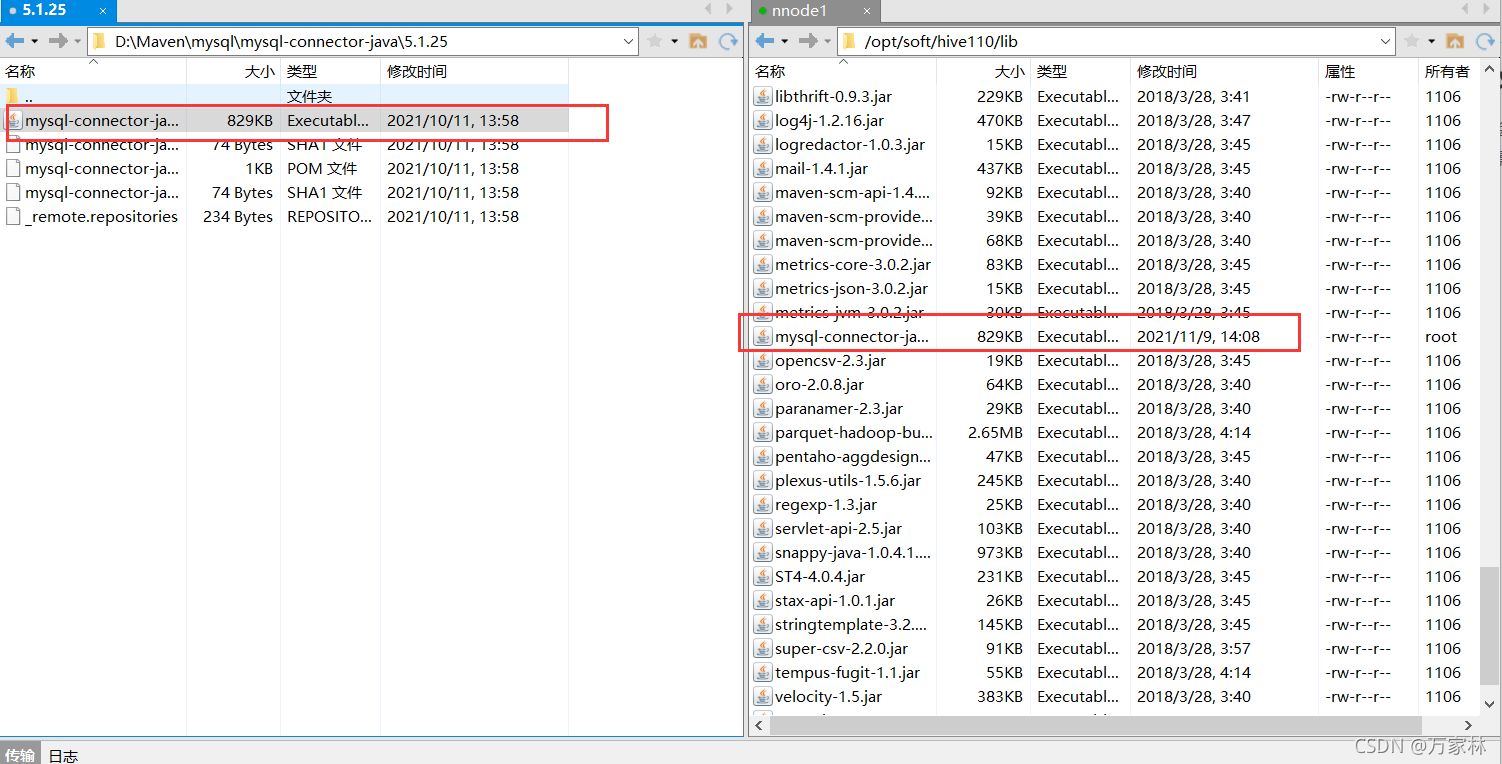

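Download address for the MySQL JDBC driver (mysql-connector-java 5.1.38), which Hive needs in its lib directory to reach the MySQL metastore: https://mvnrepository.com/artifact/mysql/mysql-connector-java/5.1.38

Other jar packages: mysql-binlog-connector-java, eventuate-local-java-cdc-connector-mysql-binlog, ...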

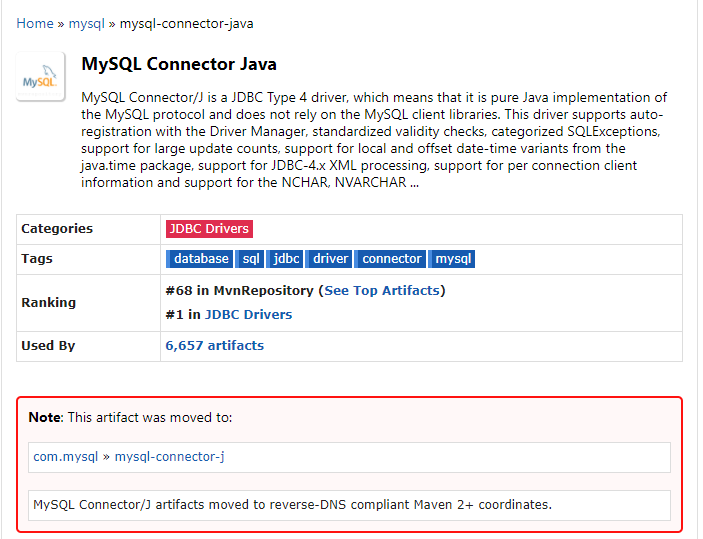

Note: it has been moved to a new directory.

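Initialize the metastore schema: schematool -dbType mysql -initSchema

Start in the foreground: hive --service hiveserver2

Start in the background: nohup hive --service hiveserver2 2>&1 &

Combined form: nohup [xxx command] > file 2>&1 & writes the output of command xxx to file. File descriptor 0 is standard input, 1 is standard output, and 2 is standard error, so 2>&1 sends standard error to the same place as standard output.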
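The redirection pattern can be tried without Hive. The sketch below uses a short `sh -c` command as a stand-in for the real service; the log file and the two echoed lines are illustrative assumptions, not output from HiveServer2.

```shell
#!/bin/sh
log_file="$(mktemp)"

# Stand-in for a long-running service such as "hive --service hiveserver2":
# it writes one line to standard output (1) and one to standard error (2).
nohup sh -c 'echo "line on stdout"; echo "line on stderr" >&2' > "$log_file" 2>&1 &

job_pid=$!        # PID of the background job
wait "$job_pid"   # only for this demo; a real service keeps running

# Both lines end up in the same file because 2>&1 follows "> file".
cat "$log_file"
```

The order of the redirections matters: `> file 2>&1` first points standard output at the file and then points standard error at the same place, whereas `2>&1 > file` would leave standard error on the terminal. When no `> file` is given and standard output is a terminal, nohup typically appends the output to nohup.out in the current directory.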

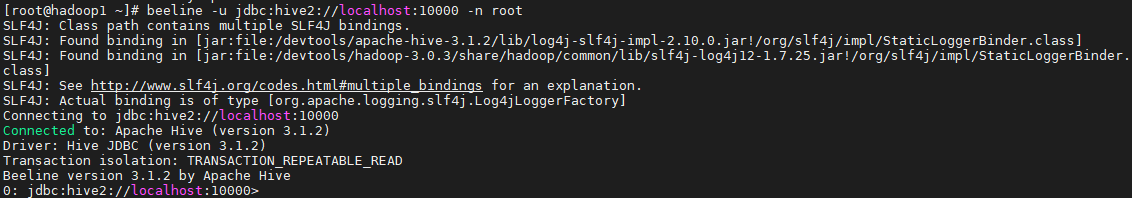

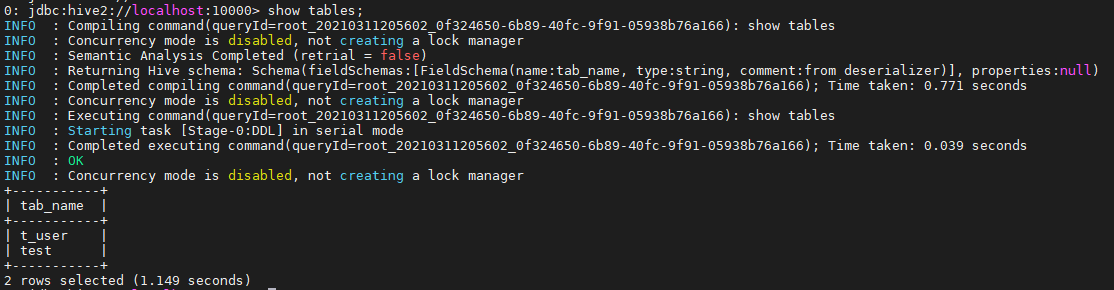

连接:beeline -u jdbc:hive2://localhost:10000 -n root 。

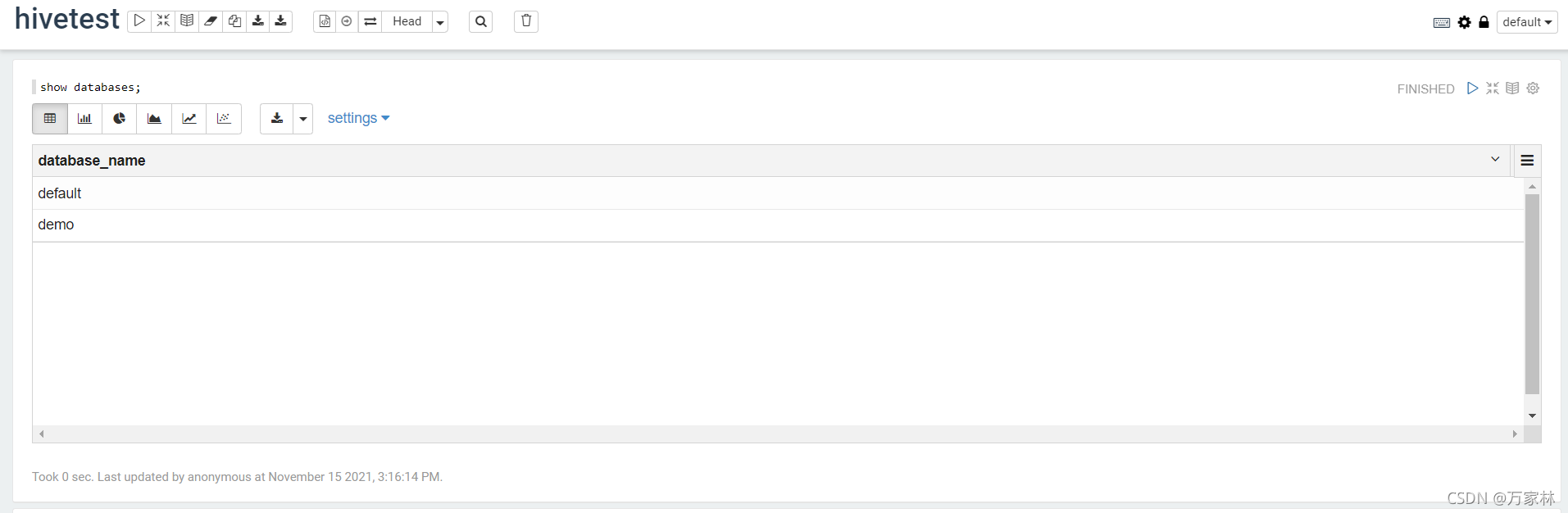

执行语句:show databases,

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-jdbc</artifactId>

<version>1.1.0</version>

<exclusions>

<exclusion>

<groupId>org.eclipse.jetty.aggregate</groupId>

<artifactId>jetty-all</artifactId>

</exclusion>

<exclusion>

<groupId>org.apache.hive</groupId>

<artifactId>hive-shims</artifactId>

</exclusion>

</exclusions>

</dependency>

import java.sql.SQLException;

import java.sql.Connection;

import java.sql.ResultSet;

import java.sql.Statement;

import java.sql.DriverManager;

public class HiveAPITest {

private static String driverName = "org.apache.hive.jdbc.HiveDriver";

public static void main(String[] args) throws SQLException {

try {

Class.forName(driverName);

} catch (ClassNotFoundException e) {

// The Hive JDBC driver class is not on the classpath

e.printStackTrace();

System.exit(1);

}

//replace "hive" here with the name of the user the queries should run as

Connection con = DriverManager.getConnection("jdbc:hive2://localhost:10000/default",

"hive", "");

Statement stmt = con.createStatement();

String tableName = "testHiveDriverTable";

stmt.execute("drop table if exists " + tableName);

stmt.execute("create table " + tableName + " (key int, value string) row format delimited fields terminated by '\t'");

// show tables

String sql = "show tables '" + tableName + "'";

System.out.println("Running: " + sql);

ResultSet res = stmt.executeQuery(sql);

if (res.next()) {

System.out.println(res.getString(1));

}

// describe table

sql = "describe " + tableName;

System.out.println("Running: " + sql);

res = stmt.executeQuery(sql);

while (res.next()) {

System.out.println(res.getString(1) + "\t" + res.getString(2));

}

// load data into table

// NOTE: filepath has to be local to the hive server

// NOTE: /opt/tmp/a.txt is a \t separated file with two fields per line

String filepath = "/opt/tmp/a.txt";

sql = "load data local inpath '" + filepath + "' into table " + tableName;

System.out.println("Running: " + sql);

stmt.execute(sql);

// select * query

sql = "select * from " + tableName;

System.out.println("Running: " + sql);

res = stmt.executeQuery(sql);

while (res.next()) {

System.out.println(String.valueOf(res.getInt(1)) + "\t" + res.getString(2));

}

// regular hive query

sql = "select count(1) from " + tableName;

System.out.println("Running: " + sql);

res = stmt.executeQuery(sql);

while (res.next()) {

System.out.println(res.getString(1));

}

// release the JDBC resources

res.close();

stmt.close();

con.close();

}

}
